@aicore/metrics

Metrics library for all core.ai services. A lightweight, efficient metrics reporting library for JavaScript applications that provides a simple interface to collect and send various metrics to a monitoring service endpoint.

npm install @aicore/metrics

import { init, countEvent, valueEvent } from '@aicore/metrics';

// Initialize the client (do this once when your app starts)

init('my-app', "dev", 'http://metrics.example.com/ingest', 'api-key-123');

// Track count metrics (e.g., page views, clicks, errors)

countEvent('page_view', 'frontend', 'homepage');

countEvent('user', 'action', 'pasteCount', 5);

// Track value metrics (e.g., response times, temperatures, scores)

valueEvent('response_time', 'api', 'get_user', 135); // 135ms

Initializes the monitoring client. This function must be called before using any other monitoring functions. The client can only be initialized once per application lifecycle.

serviceName [string][1] Unique name of the service being monitored

stage [string][1] The stage of the service, e.g. dev, prod, staging, etc.

metricsEndpointDomain [string][1] Domain where metrics will be sent, e.g. metrics.your-cloud.io

apiKey [string][1] Authentication key for the metrics service

// Initialize the monitoring client

init('my-app', "dev", 'http://metrics.example.com/ingest', 'api-key-123');

Records a metric event with the specified parameters. The client must be initialized with init() before calling this function. Metrics are batched and sent at regular intervals.

category [string][1] Primary category for the metric

subCategory [string][1] Secondary category for the metric

label [string][1] label for additional classification

count [number][3] Value to increment the metric by, defaults to 1 (optional, default 1)

// Record a single event

countEvent('page_view', 'frontend', 'homepage');

// Record multiple events

countEvent('downloads', 'content', 'pdf', 5);

Returns [boolean][4] true if the metric was recorded, false if it was dropped
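To make the batching behaviour concrete, here is a minimal sketch of how counts for the same key could be summed in memory between send intervals. It is not the library's implementation: recordCount, drainCounts, and the key format are hypothetical names chosen for illustration.

```javascript
// Hypothetical sketch, not the library's actual code.
// Counts for the same (category, subCategory, label) key are summed
// in memory until the next send interval drains them.
const pendingCounts = new Map();

function recordCount(category, subCategory, label, count = 1) {
    const parts = [category, subCategory, label];
    if (!parts.every((part) => typeof part === 'string' && part.length > 0)) {
        return false; // dropped: invalid key
    }
    if (typeof count !== 'number' || !Number.isFinite(count)) {
        return false; // dropped: invalid count
    }
    const key = parts.join('|');
    pendingCounts.set(key, (pendingCounts.get(key) ?? 0) + count);
    return true;
}

// Called once per interval: returns the aggregated batch and resets state.
function drainCounts() {
    const batch = [...pendingCounts.entries()].map(([key, total]) => ({ key, total }));
    pendingCounts.clear();
    return batch;
}

recordCount('page_view', 'frontend', 'homepage');
recordCount('page_view', 'frontend', 'homepage', 4);
console.log(drainCounts()); // one entry for the key, with total 5
```

Aggregating before sending keeps the payload size proportional to the number of distinct keys rather than the number of calls.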

Records a value metric with the specified parameters. Unlike countEvent, valueEvent records each value with its own timestamp without aggregation. The client must be initialized with init() before calling this function. Limited to MAX_VALUES_PER_INTERVAL values per metric key per interval.

category [string][1] Primary category for the metric

subCategory [string][1] Secondary category for the metric

label [string][1] label for additional classification

value ([number][3] | [string][1]) The value to record

// Record a temperature value

valueEvent('temperature', 'sensors', 'outdoor', 22.5);

Returns [boolean][4] true if the metric was recorded, false if it was dropped
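The per-key limit can be pictured with a small sketch. The limit value of 3 and the recordValue helper below are assumptions for the demo; the real MAX_VALUES_PER_INTERVAL value and the library's internals are not stated here.

```javascript
// Hypothetical sketch: the limit value and helper name are assumptions.
const MAX_VALUES_PER_INTERVAL = 3; // assumed small limit for the demo

const pendingValues = new Map();

function recordValue(category, subCategory, label, value) {
    const key = `${category}|${subCategory}|${label}`;
    const samples = pendingValues.get(key) ?? [];
    if (samples.length >= MAX_VALUES_PER_INTERVAL) {
        return false; // dropped: this key is full for the current interval
    }
    samples.push({ value, timestamp: Date.now() }); // each value keeps its own timestamp
    pendingValues.set(key, samples);
    return true;
}

const recorded = [20.1, 20.4, 20.9, 21.3].map((reading) =>
    recordValue('temperature', 'sensors', 'outdoor', reading)
);
console.log(recorded); // [ true, true, true, false ]
```

Checking the boolean return value is the only way a caller learns that a sample was dropped, so high-frequency callers may want to sample or pre-aggregate before calling valueEvent.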

Manually sends all collected metrics immediately without waiting for the interval. This is useful for sending metrics before application shutdown or when immediate reporting is needed. The client must be initialized with init() before calling this function.

// Send all collected metrics immediately

await flush();

console.log('All metrics have been sent');

Returns [Promise][5] A promise that resolves when the metrics have been sent, or rejects if sending fails
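For the shutdown case, one possible pattern is to wrap the flush call in a handler that runs once and then exits. In the sketch below, createShutdownHandler is a hypothetical helper, and flushFn stands in for the library's flush() so the example stays self-contained.

```javascript
// Sketch under assumptions: `flushFn` stands in for flush() from the library,
// and `exit` is injected so the handler can be exercised without ending the process.
function createShutdownHandler(flushFn, exit) {
    let shuttingDown = false;
    return async function handleShutdown() {
        if (shuttingDown) {
            return; // ignore repeated signals
        }
        shuttingDown = true;
        try {
            await flushFn();
            exit(0);
        } catch (error) {
            console.error('Failed to flush metrics before exit', error);
            exit(1);
        }
    };
}

// Possible wiring in an application (assuming flush is imported from '@aicore/metrics'):
// const handler = createShutdownHandler(flush, (code) => process.exit(code));
// process.once('SIGTERM', handler);
// process.once('SIGINT', handler);
```

Because flush() rejects when sending fails, the handler exits with a non-zero code in that case rather than hiding the failure.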

The metrics library includes built-in system monitoring capabilities that automatically track essential system metrics when initialized.

The following metrics are automatically collected:

You can use the SYSTEM_CATEGORY constant to tag your own metrics related to system resources for a specific host.

This ensures metrics are properly attributed to the specific host:

import { valueEvent, SYSTEM_CATEGORY } from '@aicore/metrics';

// Record custom system-related metrics with proper host attribution

valueEvent(SYSTEM_CATEGORY, 'custom', 'my_metric', 42.5);

All system metrics are automatically tagged with host information for proper identification in your monitoring dashboards.
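As an illustration of a custom system-related value, the sketch below derives a memory usage percentage from total and free byte counts. memoryUsagePercent is a hypothetical helper and is not how the library computes its built-in metrics.

```javascript
// Sketch only: one way a host-level memory percentage could be derived.
function memoryUsagePercent(totalBytes, freeBytes) {
    if (!(totalBytes > 0) || !(freeBytes >= 0) || freeBytes > totalBytes) {
        throw new RangeError('expected totalBytes > 0 and 0 <= freeBytes <= totalBytes');
    }
    const usedBytes = totalBytes - freeBytes;
    return Math.round((usedBytes / totalBytes) * 1000) / 10; // one decimal place
}

console.log(memoryUsagePercent(16e9, 4e9)); // 75
```

In a Node.js service the inputs could come from os.totalmem() and os.freemem(), and the result could then be reported with valueEvent(SYSTEM_CATEGORY, 'custom', 'memory_used_percent', percent), where the sub-category and label are example names.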

For advanced use cases, you can directly use the MonitoringClient class:

import { MonitoringClient } from '@core/monitoring-client';

const client = new MonitoringClient('my-service', 'prod', 'http://metrics.example.com/ingest', 'api-key-123');

// Send custom metrics directly

const metrics = [

`my-service{metric="custom_metric",category="test",subCategory="example"} 1 ${Date.now()}`

];

client.sendMetrics(metrics)

.then(result => console.log('Metrics sent successfully', result))

.catch(error => console.error('Failed to send metrics', error));
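The metric string in the example above follows a name{labels} value timestamp shape. A small helper, sketched here under the assumption that this shape is all sendMetrics expects, can build such lines from structured input. formatMetricLine is a hypothetical name, and escaping of special characters is left out because the expected rules are not documented here.

```javascript
// Sketch: builds a line in the same shape as the example above.
// No escaping is applied; label values containing quotes are rejected instead.
function formatMetricLine(serviceName, labels, value, timestampMs = Date.now()) {
    const labelText = Object.entries(labels)
        .map(([name, text]) => {
            if (String(text).includes('"')) {
                throw new Error(`label ${name} must not contain double quotes`);
            }
            return `${name}="${text}"`;
        })
        .join(',');
    return `${serviceName}{${labelText}} ${value} ${timestampMs}`;
}

const exampleLine = formatMetricLine(
    'my-service',
    { metric: 'custom_metric', category: 'test', subCategory: 'example' },
    1,
    1700000000000
);
console.log(exampleLine);
// my-service{metric="custom_metric",category="test",subCategory="example"} 1 1700000000000
```

An array of such lines can then be passed to client.sendMetrics as in the example above.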

This library has been optimized to work with QuestDB as the backend time-series database, providing high-performance metrics storage and querying capabilities.

The recommended production setup uses NGINX as a reverse proxy for authentication, SSL termination, and rate limiting:

Client → NGINX (SSL + Auth) → QuestDB → Grafana (Visualization)

Benefits of NGINX Integration:

When using the complete monitoring stack, metrics are sent through NGINX:

// Initialize with NGINX proxy endpoint

init('my-service', 'prod', 'https://metrics.yourdomain.com/ingest', 'your-bearer-token');

Authentication: Uses Bearer token authentication configured during stack installation. The token is automatically generated and configured in NGINX for the /ingest endpoint.
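To show what Bearer token authentication looks like on the wire, here is a sketch that assembles the request without sending it. Only the Authorization header follows from the text above; the POST method, the text/plain content type, and the buildIngestRequest helper are assumptions, since the library builds the actual request internally.

```javascript
// Sketch: the HTTP method and content type are assumptions;
// only the Bearer Authorization header is taken from the description above.
function buildIngestRequest(endpoint, bearerToken, body) {
    if (!bearerToken) {
        throw new Error('a bearer token is required');
    }
    return {
        url: endpoint,
        options: {
            method: 'POST',
            headers: {
                Authorization: `Bearer ${bearerToken}`,
                'Content-Type': 'text/plain'
            },
            body
        }
    };
}

const ingestRequest = buildIngestRequest(
    'https://metrics.yourdomain.com/ingest',
    'your-bearer-token',
    'example payload'
);
console.log(ingestRequest.options.headers.Authorization); // Bearer your-bearer-token
```

A manual check of the proxy could pass these values to fetch(ingestRequest.url, ingestRequest.options) on a Node.js version that provides a global fetch.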

For detailed configuration and operational guidance, refer to the comprehensive documentation included with the monitoring stack:

These guides provide production-ready configurations and best practices for enterprise deployments.

IMPORTANT: Before using this library with QuestDB, you must create the optimized metrics table schema to prevent performance issues from auto-creation with default settings.

QuestDB automatically creates tables when receiving InfluxDB Line Protocol data, but uses default SYMBOL capacities of only 256, which causes performance degradation as your metrics scale. Creating the schema beforehand ensures optimal performance.

If you're using the complete QuestDB monitoring stack, the schema is automatically created during installation:

# Complete monitoring stack with optimized schema

git clone https://github.com/aicore/quest.git && cd quest/

sudo ./setup-production.sh --ssl # For HTTPS with Let's Encrypt

# OR

sudo ./setup-production.sh # For HTTP only

For existing QuestDB installations, create the schema manually before sending any metrics:

# Execute in QuestDB (via web interface at http://questdb:9000 or curl)

curl -G "http://questdb:9000/exec" --data-urlencode "query=CREATE TABLE IF NOT EXISTS metrics (

timestamp TIMESTAMP,

service SYMBOL CAPACITY 50000,

stage SYMBOL CAPACITY 1000,

metric_type SYMBOL CAPACITY 1000,

category SYMBOL CAPACITY 10000,

subcategory SYMBOL CAPACITY 10000,

label VARCHAR,

host SYMBOL CAPACITY 100000,

value DOUBLE

) timestamp(timestamp) PARTITION BY DAY WAL;"
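The same request can be prepared from JavaScript. The sketch below only builds the URL for QuestDB's /exec endpoint with the statement URL-encoded, mirroring what curl -G --data-urlencode does. buildExecUrl is a hypothetical helper, and the SQL string is abbreviated for the demo; the full CREATE TABLE statement above is what should be sent.

```javascript
// Sketch: builds the GET URL for the /exec endpoint with an encoded query.
function buildExecUrl(baseUrl, sql) {
    const trimmedBase = baseUrl.replace(/\/+$/, ''); // avoid a double slash before /exec
    return `${trimmedBase}/exec?query=${encodeURIComponent(sql)}`;
}

// Abbreviated statement for the demo; use the full schema from above in practice.
const demoSql = 'CREATE TABLE IF NOT EXISTS metrics (timestamp TIMESTAMP, value DOUBLE) timestamp(timestamp) PARTITION BY DAY WAL;';
const execUrl = buildExecUrl('http://questdb:9000/', demoSql);
console.log(execUrl);
```

The resulting URL can be requested with any HTTP client, for example fetch(execUrl) where a global fetch is available.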

Metrics are automatically converted to InfluxDB Line Protocol format and stored in QuestDB with the following optimized schema:

CREATE TABLE metrics (

timestamp TIMESTAMP,

service SYMBOL CAPACITY 50000, -- Service name (fast filtering)

stage SYMBOL CAPACITY 1000, -- Environment: dev/staging/prod

metric_type SYMBOL CAPACITY 1000, -- count/value (fast filtering)

category SYMBOL CAPACITY 10000, -- Metric categories (optimized filtering)

subcategory SYMBOL CAPACITY 10000, -- Metric subcategories (optimized filtering)

label VARCHAR, -- Labels (unlimited cardinality)

host SYMBOL CAPACITY 100000, -- Hostnames (optimized for containers/hosts)

value DOUBLE

) timestamp(timestamp) PARTITION BY DAY WAL;
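To illustrate how a metric could map onto this schema, the sketch below renders one record as an InfluxDB Line Protocol line. It assumes the SYMBOL columns are sent as tags and that label and value are sent as fields; the library's real conversion may differ, and toLineProtocol and the record shape are hypothetical.

```javascript
// Sketch under assumptions: SYMBOL columns become tags, label and value become fields.

// Tag values: escape commas, equals signs, and spaces.
function escapeTag(text) {
    return String(text).replace(/([,= ])/g, '\\$1');
}

// String field values: escape backslashes and double quotes.
function escapeStringField(text) {
    return String(text).replace(/(["\\])/g, '\\$1');
}

function toLineProtocol(metric) {
    const tags = ['service', 'stage', 'metric_type', 'category', 'subcategory', 'host']
        .map((name) => `${name}=${escapeTag(metric[name])}`)
        .join(',');
    const fields = `label="${escapeStringField(metric.label)}",value=${Number(metric.value)}`;
    const timestampNs = BigInt(metric.timestampMs) * 1000000n; // line protocol defaults to nanoseconds
    return `metrics,${tags} ${fields} ${timestampNs}`;
}

const ilpLine = toLineProtocol({
    service: 'my-app',
    stage: 'dev',
    metric_type: 'count',
    category: 'page_view',
    subcategory: 'frontend',
    host: 'web 01',
    label: 'homepage',
    value: 5,
    timestampMs: 1700000000000
});
console.log(ilpLine);
```

A numeric field written without a type suffix is treated as a float in line protocol, which matches the DOUBLE value column.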

The single-table design enables powerful Grafana dashboards and alerting with simple SQL queries.

-- Response time trends by service

SELECT timestamp, service, avg(value) as response_time

FROM metrics

WHERE category = 'response_time'

AND metric_type = 'value'

AND $__timeFilter(timestamp)

GROUP BY timestamp, service

ORDER BY timestamp;

-- Request rate by service

SELECT timestamp, service, sum(value) as requests

FROM metrics

WHERE category = 'http_request'

AND metric_type = 'count'

AND $__timeFilter(timestamp)

GROUP BY timestamp, service

ORDER BY timestamp;

-- CPU usage by host

SELECT timestamp, host, avg(value) as cpu_percent

FROM metrics

WHERE category = 'system'

AND subcategory = 'cpu'

AND label = 'usage_percent'

AND $__timeFilter(timestamp)

GROUP BY timestamp, host

ORDER BY timestamp;

-- Memory usage trends

SELECT timestamp, host, avg(value) as memory_percent

FROM metrics

WHERE category = 'system'

AND subcategory = 'memory'

AND label = 'usage_percent'

AND $__timeFilter(timestamp)

GROUP BY timestamp, host

ORDER BY timestamp;

-- Service selector

SELECT DISTINCT service FROM metrics WHERE $__timeFilter(timestamp);

-- Environment selector

SELECT DISTINCT stage FROM metrics WHERE $__timeFilter(timestamp);

-- Host selector

SELECT DISTINCT host FROM metrics WHERE $__timeFilter(timestamp);

SELECT service, avg(value) as error_rate

FROM metrics

WHERE category = 'error_rate'

AND timestamp > now() - 5m

GROUP BY service

HAVING avg(value) > 5; -- Alert if > 5% error rate

SELECT service, subcategory, avg(value) as avg_response_time

FROM metrics

WHERE category = 'response_time'

AND timestamp > now() - 5m

GROUP BY service, subcategory

HAVING avg(value) > 1000; -- Alert if > 1000ms

-- Alert when no metrics received (service down)

SELECT service, count(*) as metric_count

FROM metrics

WHERE timestamp > now() - 5m

GROUP BY service

HAVING count(*) = 0;

CRITICAL: The optimized QuestDB schema must be created before sending any metrics data. See the Required Schema Setup section above. Using the library without proper schema setup will result in:

# Clone the repository

git clone https://github.com/aicore/metrics.git

cd metrics

# Install dependencies

npm install

# Run unit tests

npm run test:unit

# Run integration tests (requires environment variables)

export METRICS_ENDPOINT=http://your-metrics-server/ingest

export METRICS_API_KEY=your-api-key

npm run test:integ

# Run all tests

npm run test

# Run unit tests with coverage

npm run cover:unit

# Full build process including tests, docs, and vulnerability check

npm run build

# Generate JS documentation only

npm run createJSDocs
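Since the integration tests depend on METRICS_ENDPOINT and METRICS_API_KEY, a test setup file could validate them up front and fail with a clear message. This is a sketch; readIntegrationConfig is a hypothetical helper and is not part of the repository.

```javascript
// Sketch: fail fast when the variables required by the integration tests are missing.
function readIntegrationConfig(env = process.env) {
    const required = ['METRICS_ENDPOINT', 'METRICS_API_KEY'];
    const missing = required.filter((name) => !env[name]);
    if (missing.length > 0) {
        throw new Error(`Missing environment variables: ${missing.join(', ')}`);
    }
    return { endpoint: env.METRICS_ENDPOINT, apiKey: env.METRICS_API_KEY };
}

const demoConfig = readIntegrationConfig({
    METRICS_ENDPOINT: 'http://your-metrics-server/ingest',
    METRICS_API_KEY: 'your-api-key'
});
console.log(demoConfig.endpoint); // http://your-metrics-server/ingest
```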

Since this is a pure JS template project, the build command just runs tests with coverage.

> npm install // do this only once.

> npm run build

To lint the files in the project, run the following command:

> npm run lint

To automatically fix lint errors:

> npm run lint:fix

To run all tests:

> npm run test

Hello world Tests

✔ should return Hello World

#indexOf()

✔ should return -1 when the value is not present

Additionally, to run unit/integration tests only, use the commands:

> npm run test:unit

> npm run test:integ

To run all tests with coverage:

> npm run cover

Hello world Tests

✔ should return Hello World

#indexOf()

✔ should return -1 when the value is not present

2 passing (6ms)

----------|---------|----------|---------|---------|-------------------

File | % Stmts | % Branch | % Funcs | % Lines | Uncovered Line #s

----------|---------|----------|---------|---------|-------------------

All files | 100 | 100 | 100 | 100 |

index.js | 100 | 100 | 100 | 100 |

----------|---------|----------|---------|---------|-------------------

=============================== Coverage summary ===============================

Statements : 100% ( 5/5 )

Branches : 100% ( 2/2 )

Functions : 100% ( 1/1 )

Lines : 100% ( 5/5 )

================================================================================

Detailed unit test coverage report: file:///template-nodejs/coverage-unit/index.html

Detailed integration test coverage report: file:///template-nodejs/coverage-integration/index.html

After running coverage, detailed reports can be found in the coverage folder listed in the output of coverage command. Open the file in browser to view detailed reports.

To run only unit or integration tests with coverage:

> npm run cover:unit

> npm run cover:integ

Sample coverage report:

Unit and integration test coverage settings can be updated by configs .nycrc.unit.json and .nycrc.integration.json.

See https://github.com/istanbuljs/nyc for config options.

Please run npm run release on the main branch and push the changes to main. The release command will bump the npm version.

!NB: npm publish will fail if there is another release with the same version.

To publish a package to npm, push contents to the npm branch in this repository.

@aicore/package*

If you are looking to publish a package owned by core.ai, you will need access to the GitHub Organization secret NPM_TOKEN.

For repos managed by the aicore org in GitHub, please contact your admin to get access to core.ai's NPM tokens.

Alternatively, if you want to publish the package to your own npm account, please follow these docs:

To edit the publishing workflow, please see file: .github/workflows/npm-publish.yml

We use Renovate for dependency updates: https://blog.logrocket.com/renovate-dependency-updates-on-steroids/

Several automated workflows that check code integrity are integrated into this template. These include:

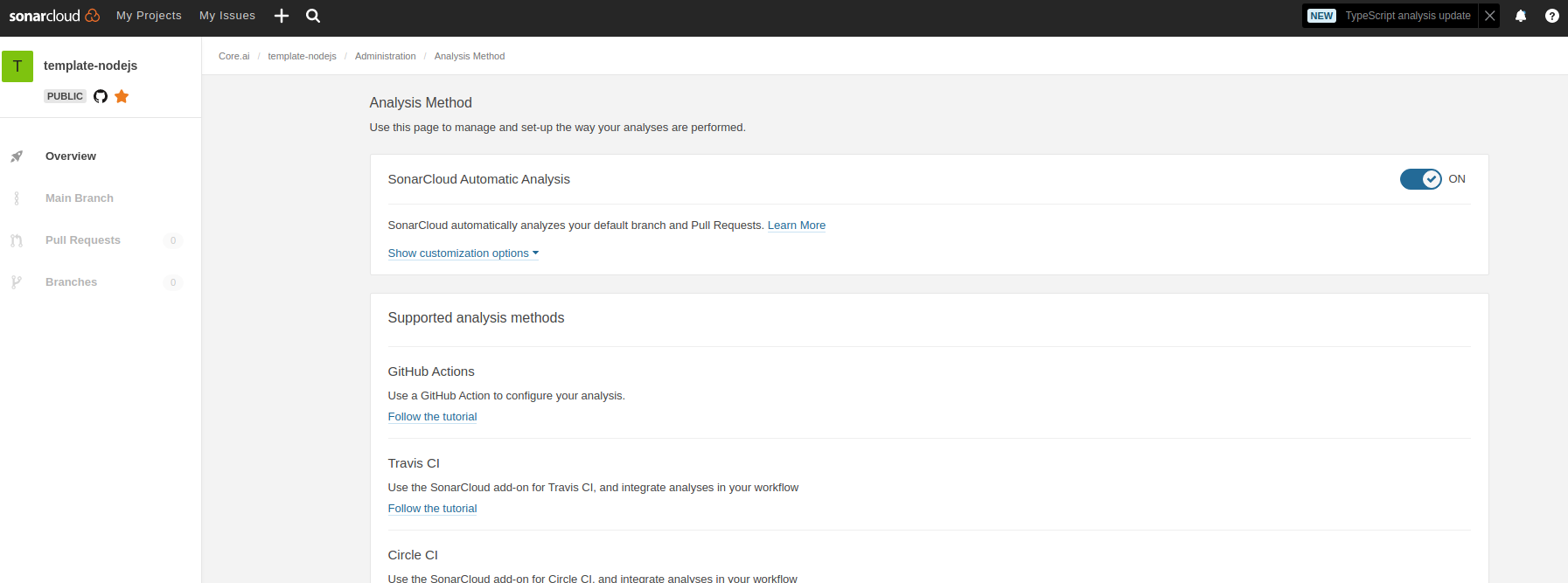

.sonarcloud.properties

Administration Analysis Method for the first time

SonarLint is currently available as a free plugin for JetBrains, Eclipse, VS Code, and Visual Studio IDEs. Use the SonarLint plugin for WebStorm or any of the available IDEs from this link before raising a pull request: https://www.sonarlint.org/ .

SonarLint static code analysis checker is not yet available as a Brackets extension.

See https://mochajs.org/#getting-started on how to write tests. Use chai for BDD style assertions (expect, should, etc.). See more here: https://www.chaijs.com/guide/styles/#expect

Since it is not straightforward to mock ES6 module imports, use the following pull request as a reference to mock imported libs:

setup-mocks.js as the first import of all files in tests.

If you want to mock/spy on fn() for unit tests, use sinon. Refer to the docs: https://sinonjs.org/

We use c8 for coverage: https://github.com/bcoe/c8 . Its reporting is based on nyc, so detailed docs can be found here: https://github.com/istanbuljs/nyc . We didn't use nyc as it does not yet have ES module support; see: https://github.com/digitalbazaar/bedrock-test/issues/16 . c8 is a drop-in replacement for the nyc coverage reporting tool.
