@llm-dev-ops/llm-auto-optimizer

LLM Auto Optimizer - Intelligent cost optimization for LLM applications

Production-ready CLI tool for intelligent cost optimization of LLM applications.

npm install -g @llm-dev-ops/optimizer

Or use with npx:

npx @llm-dev-ops/optimizer --help

Initialize the CLI:

llm-optimizer init --api-url http://your-api-url

Create an optimization:

llm-optimizer optimize create \
  --service my-llm-service \
  --strategy cost-performance-scoring

View metrics:

llm-optimizer metrics get --service my-llm-service

llm-optimizer service start - Start the optimizer service
llm-optimizer service stop - Stop the service
llm-optimizer service status - Check service status

llm-optimizer optimize create - Create new optimization
llm-optimizer optimize list - List optimizations
llm-optimizer optimize get <id> - Get optimization details
llm-optimizer optimize deploy <id> - Deploy optimization
llm-optimizer optimize rollback <id> - Rollback optimization

llm-optimizer metrics get - Get metrics for a service
llm-optimizer metrics cost - Get cost analytics
llm-optimizer metrics performance - Get performance metrics
llm-optimizer metrics quality - Get quality metrics

llm-optimizer config show - Show current configuration
llm-optimizer config set <key> <value> - Set configuration value
llm-optimizer config list - List all configuration options

llm-optimizer integration add - Add new integration
llm-optimizer integration list - List integrations
llm-optimizer integration test <name> - Test integration

llm-optimizer admin health - Check system health
llm-optimizer admin users - Manage users
llm-optimizer admin backup - Backup system data

llm-optimizer init - Initialize CLI configuration
llm-optimizer doctor - Run system diagnostics
llm-optimizer interactive - Start interactive mode
llm-optimizer completions <shell> - Generate shell completions

You can also use the CLI programmatically in your Node.js applications:

const llmOptimizer = require('@llm-dev-ops/optimizer');

// Execute command synchronously
const result = llmOptimizer.execSync(['metrics', 'get', '--service', 'my-service']);
console.log(result.stdout);

// Spawn command asynchronously
const child = llmOptimizer.spawn(['optimize', 'create', '--service', 'my-service'], {
  stdio: 'inherit'
});
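Both calls above take the CLI arguments as a flat array of strings. When the flags come from application data, a small helper can build that array. The sketch below is only an illustration: `buildArgs` is a hypothetical function, not part of the package, and it assumes every option maps to a `--name value` pair or a bare `--name` flag.

```javascript
// Hypothetical helper (not provided by the package): turns a command path
// plus an options object into the flat argument array used in the examples.
function buildArgs(command, options = {}) {
  const args = [...command];
  for (const [name, value] of Object.entries(options)) {
    // Skip options that are unset or explicitly disabled.
    if (value === undefined || value === false) continue;
    args.push(`--${name}`);
    // A boolean true is emitted as a bare flag with no value.
    if (value !== true) args.push(String(value));
  }
  return args;
}

const metricsArgs = buildArgs(['metrics', 'get'], { service: 'my-service' });
console.log(metricsArgs);
```

The resulting array could then be handed to `execSync` or `spawn` as in the examples above.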

LLM_OPTIMIZER_API_URL - API base URL
LLM_OPTIMIZER_API_KEY - API key for authentication

The CLI looks for configuration in:

~/.config/llm-optimizer/config.yaml (Linux/macOS)
%APPDATA%\llm-optimizer\config.yaml (Windows)

Example configuration:

api_url: http://localhost:8080

api_key: your-api-key

output_format: table

verbose: false

timeout: 30
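For scripts that need to find or pre-populate this file, the lookup locations and environment variables described above can be sketched in Node.js. This is a minimal sketch under stated assumptions, not the CLI's actual resolution logic: `configPath` and `envSettings` are hypothetical names, and mapping the two environment variables onto the `api_url` and `api_key` keys is an assumption.

```javascript
const os = require('os');
const path = require('path');

// Hypothetical helper: returns the documented config file location
// for the given platform (parameters are injectable for testing).
function configPath(platform = process.platform, env = process.env, home = os.homedir()) {
  if (platform === 'win32') {
    // %APPDATA%\llm-optimizer\config.yaml
    return path.win32.join(env.APPDATA || '', 'llm-optimizer', 'config.yaml');
  }
  // ~/.config/llm-optimizer/config.yaml
  return path.posix.join(home, '.config', 'llm-optimizer', 'config.yaml');
}

// Hypothetical helper: collects the two documented environment variables.
// Mapping them to api_url / api_key is an assumption, not confirmed behavior.
function envSettings(env = process.env) {
  const settings = {};
  if (env.LLM_OPTIMIZER_API_URL) settings.api_url = env.LLM_OPTIMIZER_API_URL;
  if (env.LLM_OPTIMIZER_API_KEY) settings.api_key = env.LLM_OPTIMIZER_API_KEY;
  return settings;
}

console.log(configPath());
// Print only the key names so an API key is never written to the console.
console.log(Object.keys(envSettings()));
```

Whether the environment variables take precedence over values in the file is not stated here, so check the full documentation before relying on either order.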

For full documentation, visit: https://github.com/globalbusinessadvisors/llm-auto-optimizer

Apache-2.0

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Company News

Socket was named to the Rising in Cyber 2026 list, recognizing 30 private cybersecurity startups selected by CISOs and security executives.

Research

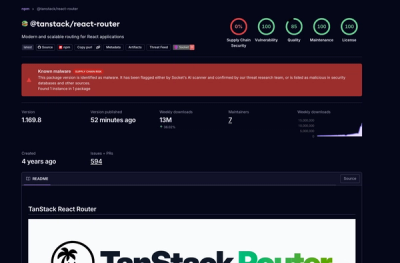

Socket detected 84 compromised TanStack npm package artifacts modified with suspected CI credential-stealing malware.

Security News

A dispute over fsnotify maintainer access set off supply chain alarms around one of Go’s most widely used filesystem libraries.